KEY TAKEAWAYS

- HPE just announced it is acquiring Nimble Storage, in part to get Nimble’s predictive analytics.

- Analytics is becoming essential to efficient data center operations in a rapidly evolving IT environment.

- There are five critical capabilities you should demand in an advanced analytics solution.

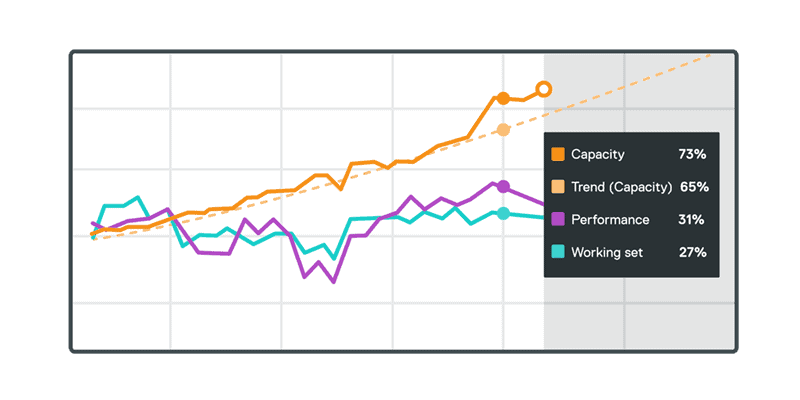

- Quick overview of the constructs that underpin Tintri Analytics. Understand how Tintri uses capacity, I/O and working set.

- With Tintri Analytics you can create application profiles — like groups of virtual machines — to understand their aggregate behavior.

- Watch Tintri predict your storage future, using up to three years of historical data to forecast your need for capacity, I/O and working set.

- Use Tintri Analytics to model how changes in application quantity or behavior impact your need for storage capacity or performance.

Can Nimble Analytics predict its own future?

There has been a lot of analysis and speculation about why HPE acquired Nimble. The official statements from both companies state that the acquisition “complements and strengthens HP’s current 3PAR products in the high-growth flash market” while the Nimble products will benefit from HPE’s broader distribution.

But a deeper look shows a different picture. While Nimble’s product line spanned from low to high end as part of its ambition to move to enterprise, it primarily sold to SMBs on the low end. In the same way, HPE has positioned 3PAR broadly from low to high end. In fact, in IDC’s price band research, no two vendors have more overlap than Nimble and HPE. With such extensive overlap in products, one can only imagine the ensuing confusion within the companies’ partner community, installed base customers, and even employees.

Both companies have also claimed that HPE’s interest in Nimble is driven by its interest in InfoSight, Nimble’s analytics tool.

At Tintri, we agree that analytics plays a hugely important role in the rapidly evolving IT landscape. As you make the journey from traditional IT to enterprise cloud, you need deeper insights than you get from conventional storage.

What should you demand from analytics?

Nimble has done a great job of marketing InfoSight as cutting-edge analytics. The reality is that it’s hampered by Nimble’s architecture: it can only provide meaningful numbers at the volume (not VM) level. While Nimble touts some VM-level calculations (for VMware environments only!), they’re based on correlations and algorithms, not ACTUAL VM behavior. And HPE’s line-up has the same architectural limitations, so any application of InfoSight across the broader portfolio will be similarly constrained.

If it’s analytics that YOU are after, and you want to go beyond information to deep insight, then choose a solution that offers these five capabilities:

- Hybrid analytics implementation. Analytics has to span on-premises and cloud-based environments. Not everyone can enable call-home on their arrays because of security restrictions, so customers need real-time data and at least 30 days of history on premises for troubleshooting and reporting purposes. Also, when troubleshooting a problem, you don’t want to wait 2 to 24 hours for the analytics to reach the cloud before you can start.

- Application-level insights. Your business cares about applications, not storage metrics. You want to know the exact behaviors and needs of individual applications. That’s why analytics has to go beyond the LUN or volume level and provide insights into the behavior of individual VMs or containers. And that doesn’t mean averages or correlations applied to LUNs and volumes, but actual VM-level analytics.

- Autonomous operations. Most organizations today want to focus on deploying new applications and starting new projects. The last thing they want to do is manage infrastructure or look at analytics to make decisions. The overall implementation of analytics should enable autonomous operations such that the storage layer can take actions or automatically recommend the optimal distribution of workloads.

- Resource planning. Conventional storage analytics tools and application-level planning tools don’t correlate storage information with application information. More advanced analytics can use historical data to precisely predict your future need for capacity and performance from the application down, rather than the infrastructure up.

- Ad hoc analytics. When you need to troubleshoot, real-time analytics should provide visibility across compute, network and storage, so you can pinpoint the root cause of a latency challenge in seconds. And when you need to plan, it’s imperative that you can use what-if models to assess the impact of changes before they are implemented.
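
To make the resource planning and what-if ideas concrete, here is a minimal sketch of forecasting from the application up. It is an illustration only, not how Tintri Analytics or InfoSight works: it assumes a plain least-squares trend line per application, and the application names, monthly usage figures, and function names (`fit_line`, `forecast`, `what_if`) are all hypothetical.

```python
def fit_line(samples):
    """Least-squares line through evenly spaced samples (needs at least 2)."""
    n = len(samples)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(samples) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, samples))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(samples, months_ahead):
    """Extrapolate the trend line months_ahead past the last sample."""
    slope, intercept = fit_line(samples)
    return slope * (len(samples) - 1 + months_ahead) + intercept


def what_if(per_app_history, months_ahead, multipliers=None):
    """Sum per-application forecasts, scaling any application listed in
    multipliers (for example 2.0 to model doubling its VM count)."""
    multipliers = multipliers or {}
    return sum(
        forecast(history, months_ahead) * multipliers.get(app, 1.0)
        for app, history in per_app_history.items()
    )


# Hypothetical monthly capacity usage in GiB, one series per application.
history = {
    "sql": [100, 110, 120, 130],
    "vdi": [200, 220, 240, 260],
}

baseline = what_if(history, months_ahead=6)
doubled_vdi = what_if(history, months_ahead=6, multipliers={"vdi": 2.0})
print(f"baseline: {baseline:.0f} GiB, doubled VDI: {doubled_vdi:.0f} GiB")
```

The point of the sketch is the shape of the calculation: each application gets its own forecast, so a change to one application can be modeled without guessing how it spreads across a shared LUN or volume. The same approach would apply to I/O or working set in place of capacity.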

Today, Tintri Analytics already delivers the above five capabilities, and in an upcoming release, we are extending Tintri Analytics to compute as well. Getting an accurate picture of both compute and performance needs from a single tool greatly simplifies infrastructure planning.

The simple lesson is that when you’re looking at your analytics options, make sure you know exactly what you’re buying.